Deconstructing the `CAP theorem' for CM and DevOps

Part 1: The Special Theory of Relativity for distributed systems

As software engineering and operations forge a new cultural bond around continuous improvement of applications and infrastructure, the database is something "dev" and "ops" have in common -- and there are things to learn from both perspectives on distributed data.

In computing, the so-called CAP theorem (1999-2002) has become both an icon and a bone of contention in the world of databases -- a supposed truth about distributed systems. A lot has been written about it since it was formulated, especially around the recent debates on `SQL/noSQL', but its many hearsay formulations are beset with a number of problems.

In this essay (in two parts), I want to explain the issues around CAP more carefully and add a broader perspective from the viewpoint of time. The aim is to talk about its potential impact on the world of infrastructure operations. I shall try to pick apart what CAP is about, what it does and doesn't mean, and what is important to understand in the discussion for DevOps.

[PART 1][PART 2]

Synopsis"I wrote you a long letter, as I didn't have time to write a short one."

|

Relativity

A plague upon us! Distributed multi-threading! Parallelism! Frames of reference!

Wrapping our heads around behaviours that happen in parallel, at multiple locations, and from different available views, is hard. In science, the analysis of this is called relativity theory, and many of the great minds of philosophy have struggled with versions of it, including Galileo, Einstein and Kripke. The problem is no easier in computing, but in distributed systems we have to do it all the time.

The so-called CAP theorem was a trigger that unleashed a public discussion about this in the distributed database world, and today its name is brandished both as a badge of honour, and even as a weapon, in debates about consistency of viewpoint in distributed systems -- what Einstein called simultaneity, and what databasers call distributed consistency.

Stories about simultaneity generally end up with the same answer in these theories: simultaneity is not a helpful concept in the world, and multiple agents, players, actors, or whatever we would call them, are doomed (indeed encouraged) to see the world from unique perspectives, and be contented to disagree about its consistency. Why? Because ultimately it is the responsibility of each observer of the world to find their own consistency from their own perspective, and based on what they can observe impartially about it. Sometimes, we will be able to calculate others' perspectives from our own, but only within the limits of communication, and no faster than the rate at which messages can be sent.

There is much to be learned from thinking about relativity (and I don't mean just Einstein's famous kind), and with all the attention given to CAP lately, I think that this point of view can help us to understand what CAP does and doesn't mean in a wider range of contexts than just databases. My plan then is to visit the issues, as originally presented for databases, and then see what this means in general for distributed infrastructure.

Brewer's conjecture

Originally a conjecture made during a keynote presentation in 2000 [1,10] (see numbered references at the end), what we refer to today as the "CAP theorem" was as a reaction to the developments in database clustering in the 1990s. "Brewer's conjecture", as Lynch and Gilbert called it[3], was a remark about how certain trade-offs must be made about a user's expectations of consistency and availability of information (see his later perspective on this in [2]). Lynch and Gilbert made a theorem inspired by it, in a paper in 2002[3].

Brewer's simple observation was that hoping for a perfect world with global and consistent viewpoints on knowledge was subject to certain limitations, especially if the parts of that world were distributed in a network with potentially imperfect connectivity. This much is indisputable.

The reading list at the end of this article is well worth digesting to get a full account from a variety of perspectives. I will not add much new to these articles, but hope for a slightly more plausible formulation of the issues, using Promise Theory as my guide.

Is it a theorem?

The first question to ask is: is the CAP theorem a real theorem? Alas it is not; it remains a "truism" or a hypothesis that is unprovable. Brewer's original conjecture has not been proven with mathematical rigour -- indeed, the formulation in terms of C, A and P is too imprecise for that to happen. So the status of CAP as a theorem is something of an urban myth, but read on.

The CAP conjecture motivated something following the forms of a theorem in [3], and by that we mean that -- for a given set of assumptions and definitions, a partial proof was demonstrated supporting about 1/6th of the asserted conjecture. However, if you read [3], you will see that what is proven is not really what is stated as being the CAP conjecture, Also, as a piece of scientific work, the paper is somewhat difficult to understand today, because it builds on a very particular set of unstated assumptions, jargon and mode of thinking that was rooted in the time of its writing. Moreover, it provides no precise definitions of any of the quantities referred to, particularly the much-discussed "P" ("tolerance" of packet loss). Part of the problem is that the conjecture is about network fault tolerance, but it never models the network at all.

So, as theorems go, [3] is not high art, either in its formulations or its proof technique, and what it proves is only implicitly related to the CAP conjecture, making the paper of limited value for posterity. But all is not lost.

In spite of this, CAP itself is not useless, as it has served the ultimate purpose of science: to stimulate thought. Various authors (see reading list) have proposed modifications or simplifications of CAP that bring different insights. Many of these viewpoints reflect the search for clarity in formulating the question itself -- which is what science is for.

Perhaps more seriously, "The CAP Theorem" is often touted as a reason for making certain technology trade-offs in actual systems, and thus we expect statements about it to not misrepresent reality. It is particularly cited in current discussions about "noSQL" databases, who have challenged traditional database transaction lore, even stirring up great offense amongst unilateralists. But let's be clear, even a fully proven theorem is not necessarily a justification for doing anything, it is simply a hopefully clear statement about something that tries to establish the boundaries of truth, within a framework of assumptions. What we do with that truth, and the extent to which we hold it as an authority, is our own business. So, with all that said, let's try to figure out what it all means, and how we can respond to it.

What is it really about?

The clearest exposition of what was intended by vintage CAP seems to be in [10], and we can retrofit the idea, without worrying about proof. Suppose we hand-wave the following ideas about data across a distributed system (imagine a number of distributed agents sharing data with one another, and with different parts of the system getting data updates randomly):

- C: consistency/uniformity of data at all locations (no one lags behind updates once they have been made somewhere within the system - there is only one value for each key globally).

- A: availability of the data service (we can access data in a reasonable time)

- P: partition tolerance (if we break up the system into pieces that can't communicate, it continues to work "correctly")

If you think these ideas are vague, you are not alone. They are certainly unsuitable for theorem proving. For instance, are we talking about a single observer (user) making requests and a back-end of many servers with different data, or are we talking about replicated data and multiple users? The distinction turns out to matter for other definitions. Is the user considered to be part of the system or outside of it? How is a user different from, say an aggregator (like a front-end web-tier to a distributed database). These questions actually matter if you are going to formulate something precise enough to prove. In fact, much confusion seems to arise from thinking too much in terms of interfaces (APIs perhaps) as if they were adequate representatives of the underlying system.

Availability. For instance, "A" means the availability, but of what? The entire database, or just one particular part of it we need? How long should we wait for a response from a server before saying it is unavailable? What is the acceptable latency? If the whole thing goes away, then that seems to give a completely consistent view of the data (nothing). If only parts we don't need go away for a while, that would not affect an end-user, but it would be formally inconsistent. If a redundant replica (like a secondary DNS server) does not agree with the master source then it is not consistent, but it might be good enough for a while. When we think about what availability means, partial or global, we immediately wonder what consistency means.

Fig 1. Availability of all or part of the data, or agents.

What goes missing affects what we can say about consistency.

A similar problem comes up with "P" or partition tolerance. A partition is easy to define: a loss of connectivity, or some packet loss in the network. but tolerating that situation by behaving "correctly" is fraught with ambiguity -- again, the seriousness of situation and the "correct behaviour" depends on the kind of system we are thinking of.

Horizontal partition tolerance Suppose we have multiple data stores for load balancing, or backup (see fig 2), that are supposed to be synchronized. If an end user has access to both source and replica, but source and replica are partitioned (e.g. a firewall or bad network route), then the observer could see different data about the same keys/values. This kind of thing happens in DNS all the time, of course, and we live with it. Caching of data values anywhere leads to this issue. In a database about bank transactions, the problem is usually considered to be more serious.

Fig 2. Partition on internal consistency (can't back up or secure data redundancy).

We can still reach data, but if some systems suddenly failed, the backup would be out of sync.

The user might even be able to see both versions.

| Transaction Locking or "atomicity" is the classical answer to consistency versus availability, i.e. copy-on-write (lock read/write access everywhere, update all copies, release lock) is the usual answer to distributed data. This introduces a lot of forced unavailability of data as distributed waiting grinds the system to a halt to brute-force global consistency. |

Vertical partition tolerance If the partition actually prevents us from accessing any sub-part of a primary source (e.g. if we have horizontal scaling on the back end because a single server just can't contain all the data, or say multiple sources located in different countries), then we may not have access to certain keys/values. Then this kind of partition must lead to partial availability, and as long as there is no overlap between the source shards, there is also consistency within the partial system that a reader/observer/user can observe.

Fig 3. Partition on external availability (can't get complete access to data)

but the part we see can still be self-consistent.

Clearly partitioning and availability cannot be totally separate concepts either, and somewhere in here is the essence of CAP. So, at this point, let's simply cut to the chase and write down the intuitive formulation of CAP from [10], then we can retrofit meaning to C, A and P. CAP says that we can only have 2 out of 3 of these properties simultaneously, meaning there are 3 kinds of distributed system (or three phases) we can make:

- CA without P (local consistency, no errors)

If we can't be fault-tolerant of a partitioning (communication breakdown) in the system, then we can still have consistency and availability of the data shared by agents within each partition, by ignoring other partitions. e.g. if we put a firewall in the middle of a distributed system then we can't expect data to be consistent across this partition, but on each side agents can still share and harmonize their data values so that they are locally consistent by talking to each other. This is local rather than global consistency.

In this configuration, we mean: local C for a partial system, 100% A for the partial system, and no P does not exclude several partitions from existing with their own internal CA. Partitioning thus means having multiple independent systems with 100% CA that do not need to interact (a trivial case).

- CP without A (transaction locking)

If a system is allowed to not answer requests at all (turn off "A"), we can have the artificial consistency by moratorium on change. We claim to tolerate partitioning/faults, because we simply block all responses if a partition occurs, assuming that we cannot continue to function correctly without the data on the other side of a partition. Once the partition is healed and consistency can once again be verified, we can restore availability and leave this mode.

In this configuration, we mean: global C, and global correct behaviour in P is to block access to replica sets that are not in synch -- this requires forcibly blocking "A". CAP suggests that this "no A" affects the whole database, but it only need affect the data affected by the partition. In order to tolerate P at any time, we must be ready to dispense with A at any time for global consistency, so the use of terms P and A refer to 100% assurance, but the remedy can still be local. This is basically the transaction lock.

- AP without C (best effort, fault tolerant)

Finally, if we don't care about about global consistency (i.e. simultaneity), then every part of the system can make available what it knows. If there is a partition, then each part might be able to answer someone, even though the system as a whole has been broken up into incommunicable regions. In that sense one can tolerate partitioning of the total system by caching and not caring about whether all data are entirely up to date.

In this configuration without C means without the assurance of global C at all times. The system might be consistent, but we cannot verify it -- we just don't know. When we claim A and P together, we have to mean partial availability within a partition. If we assume that agents can cache data values, then this might be equivalent to CA without P if agents are not completely isolated.

We see that the terms C, A and P are used to mean quite different things in each of the cases, making it very hard to use them in any proof. But the intended meaning is starting to become clearer.

What is wrong with this story?

On the surface, there seems little wrong with this at the gut level, modulo some sloppy terminology, but questions jump out at you from these 3 supposed cases. Does availability mean 100% availability at all times (to make it consistent with the idea of partitioning)? Does A take into account caching or redundancy, which further complicates the discussion of freshness or consistency of data?

At the root of the discussion of consistency is a naive view of time itself, and the absence of a model of the network infrastructure on which the whole thing depends. Availability is a question of time limits: when do we give up trying to get an answer? How long does one of the three CAP phases last? Can we go from one phase to another at some moment in time?

The entire formulation is also typically static, in the way that the world of theorems tends to be. It pretends that the system moves from one crystalline state to another, in such a way that the same perfect view can be available to everyone in the system simultaneously. This is a deeply flawed view, which is ultimately a physical impossibility because of the finite speed of information.

The definition of consistency is a problem. It makes no clear promise but has an implicit assumption of verification through continuous look-up. It is missing a model of user expectation, over what time-scale is this consistency required? A real distributed system is a messy melange of change happening at random locations at random times. Consistency requires a more sophisticated model that takes into account the change processes. Are we doing everything as fast as possible in case of sudden failure, or do we really need to look up data that fast?

The confusions arise from the lack of separation of concepts, and the absence of a model of time and space (where and when) and signal propagation. CAP is all about networks, but the one thing it doesn't have is a model of the network itself.

In other scientific theories of relativity, the punch line has always been that global consistency is impossible because of limitation on the rate of communication. The same will be true here. So at one level, the problem is that one wastes a lot of time talking about something that doesn't exist. Ultimately, in the most extreme distributed system we would literally have to deal with Einsteinian relativity, and we would find that the CAP model of consistency is literally impossible to achieve.

A promise theory view of the relativity of knowledge

Let's start again and try to get a little clarity around the concepts. My tool of choice is Promise Theory, which is a framework I developed originally for describing CFEngine (a system that has to deal with all of these issues), and which handles CAP-like questions simply and clearly. Promise Theory [12] was introduced to offer an atomic theory of distributed systems, making only the most elemental assumptions about agents. (For a more complete discussion of distributed data and consistency in promise theory, see [13].)

Without getting too technical, let's try to see if we can define C, A and P somewhat better, taking into account time and space. Whether or not a system is available or consistent, etc, is an assessment that any agent within a distributed system should be able to make based on the evidence it can observe. Consistency will be about whether agents (can) make the same assessments. Considering different observational viewpoints brings us back to relativity, and promise theory has a few principles to help us.

- An agent can only make promises about its own behaviour.

- It can make promises about its behaviour that rely on other agents' cooperation, conditional on them keeping their promises.

- Each agent knows only its own state, and any information it promises to assimilate from other agents, which in turn promise to share their own state with it.

- All promises are statements of best effort and represent the best outcome of the system, but do not offer any guarantees.

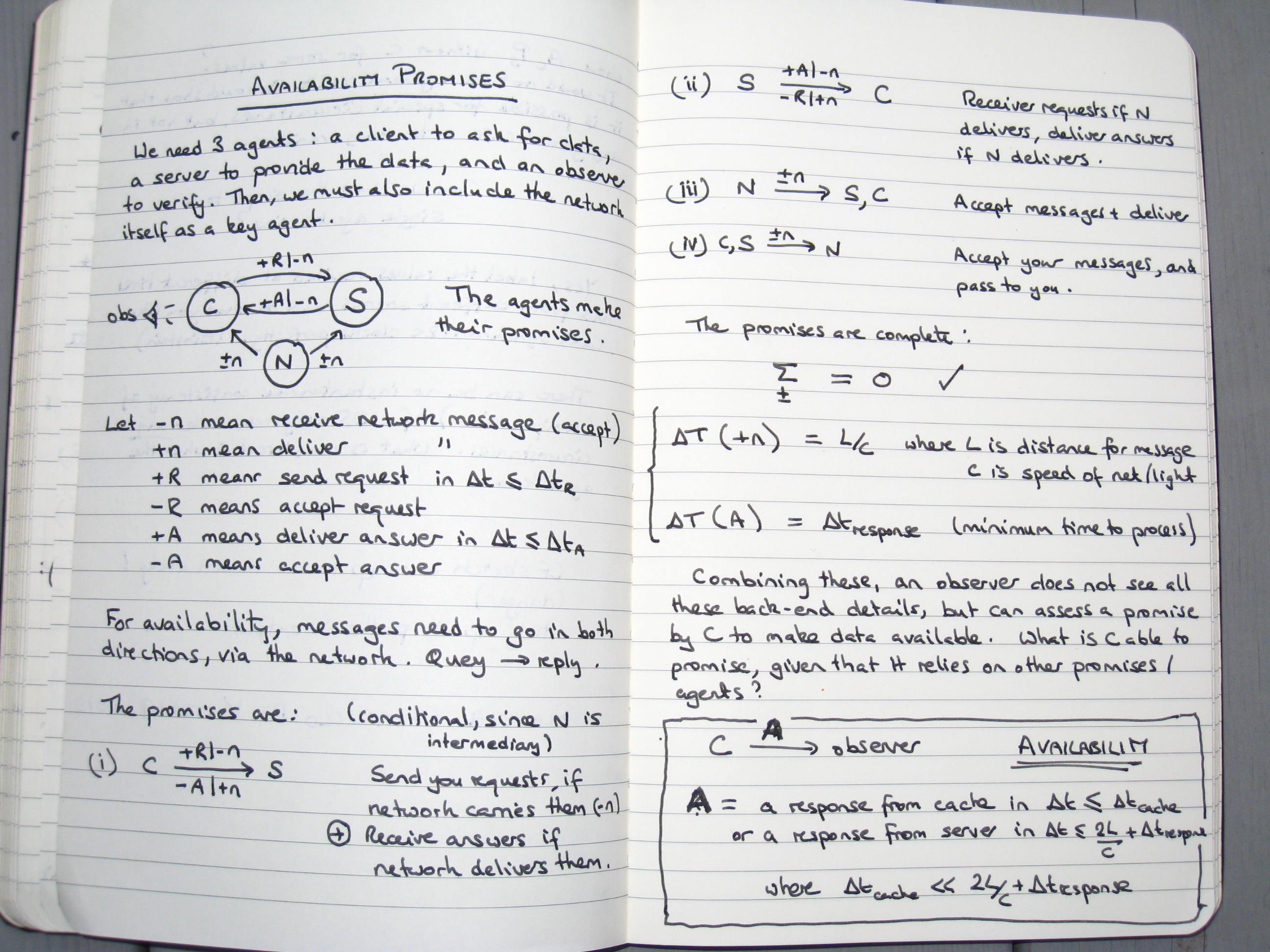

To use promise theory, we try to construct a promise for some desired property without breaking these rules. Availability is fundamental to being able to measure the state of data at different locations, so let's start with that.

- A is for Availability

To make a promise theory model, we need to include all the agents that play a role in providing access to data. We can keep this simple and take the following agents, providing keeping `services' promises in this interaction.

- C: A client who will make a request for data/state and give up waiting for a response after a time interval Δtmax. This limit is the policy that distinguishes available from unavailable.

- S: A server that will answer that request at a time of its choosing.

- N: The network that promises to transport the data in between, at a time and rate of its choosing.

- O: An observer to whom the answer would be reported by the client (as a final arbiter).

Each of these agents can only make promises about their own behaviour. See my notes in the figure below about how to construct this interaction.

From my notebook: How to define availability with promise theory.

What independent agents can offer and channel for others.

Availability can, at best, be promised with a time delay.What we get out of this analysis is the following conclusion. An agent S is said to be available to another agent C, iff C receives a reply to its request from S within a finite amount of time Δt < Δtmax. Notice that, in accordance with promise theory, this definition is purely from the observable viewpoint of the agent C and so makes no assumptions about what happens outside of C. It takes the promises made by other other agents under advisement and makes its own promises conditionally on the assumption that they will try to keep their promises. The definition has to refer to time, as this is the only way to resolve whether a reply has been received or not. At what point do we stop waiting to know the answer? C must have some kind of internal clock to make this assessment, else we have no way of measuring availability.

In this example, I added the observer as a separate agent to show the possibility of caching information from S locally at C. The observer sees cached information as available, because it replies quickly, but we then have to worry about whether the cache is consistent with the value of the remote data. We see easily that availability and consistency need each other in different ways at different places and times -- it does not makes sense to talk about the availability of the whole system, but only of the agents individually. Availability of S is needed to establish consistency of data at C, and availability of C has to be curtailed to guarantee that the observer cannot see inconsistent values between cache and that retrieved from S.

According to the rules of promise theory, we would model the smallest part of the system that can change independently as a separate agent that makes individual promises. Thus, for a database, this tells us that the availability promise applies to each data value represented here by S independently, not the `whole database' interface, represented by C. So PT tells us to lock individual data -- we just rediscovered transaction locking. In conclusion: yes, we can define availability, in a way conducive to talking about distributed data. To do so, we need to refer to some form of independent real time, distinct from transaction counting.

- C is for Consistency

The intended meaning of consistency in CAP seems to be the following. If information or knowledge K is recorded as having some value K →V, at some global time t1, by any agent in a distributed system, then at any later time t2 > t1, all agents will see K → V (both the fact that it exists and has a fixed value).

Notice that to promise consistency, any agent needs to agree about the global time at which changes occur on another agent, i.e. they need to be able to agree about arbitrary points in time, or effectively have a common clock.

If there is some number of users (consumers) of data C, and a single provider S, as in the availability discussion above, this seems easy (but read on). The provider S promises to provide the latest value, or nothing. Then consistency is trivially achieved, there is only one value at a time which is V or "failed" in the case of a fault. If multiple processes are changing the data in parallel, transaction locking at S. All the promises we need to make this happen were made in the analysis above.

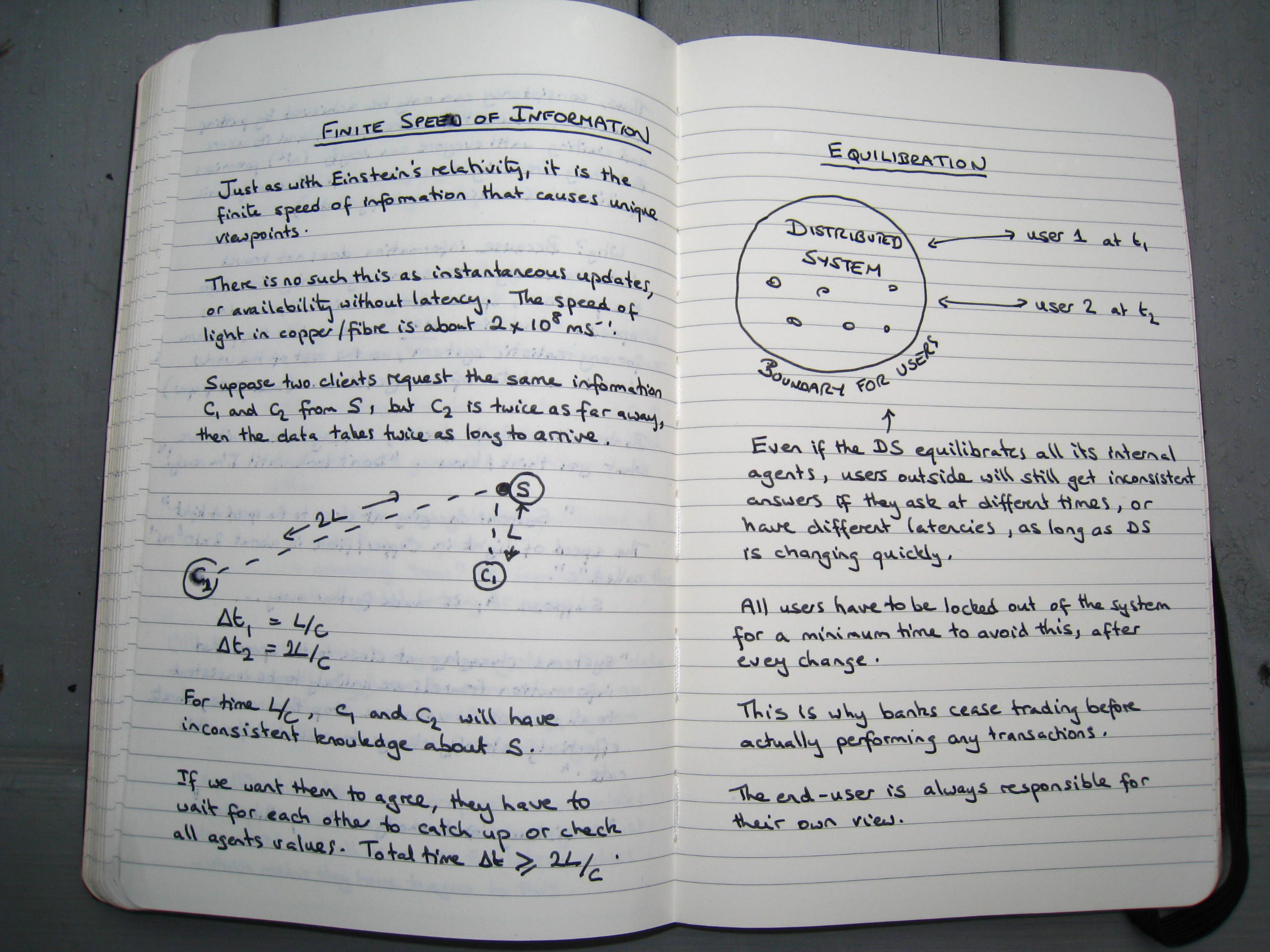

But now, suppose that we have several users looking at the same information, but with different latencies. Suddenly information is propagated to different locations at different rates, and the global view is lost. Global time does not help us at all because the finite speed of information-transfer leads to inevitable delays. No one can compare their results at any theoretical "same time". At best they could agree to compare results averaged over a coarse grain of time, or a time interval "bucket".

To regain a global view, we can, of course, wait again until all the parts catch up. This is a process we can call equilibration, as we are most familiar with in thermodynamics. To reach equilibrium, all N agents in a system need to coordinate and agree on the same data values. The final state is then called a state of maximum entropy, meaning that the same average state is maximally distributed -- and it is this that CAP wants to have (see my notes in the figure below). This takes time during which the data have to converge to a static value. If any other outside data are coming in causing confusion, this would interfere with the harmonization and make the result non-deterministic.

From my notebook: Consistency is not automatic - it depends on where/when.

Distributed consistency takes time, it is a thermodynamics equilibrium.However, this determinism comes at a price. By forcing any other change to wait for equilibration of the existing store, we distort the system's record of the time at which data are allowed to enter, because we are blocking and forcing things that want to be parallel into a serial stream. If the data represent a quickly changing source, then the meaning of the data is utterly compromised by that waiting.

Just as availability could only be promised by a recipient to an observer, conditionally on matters beyond its control, so consistency (being fundamentally a stateful function of availability) can only be promised to an observer -- in an aggregate view -- subject to a trust in other agents' promises to correctly report on all the copies of the data around the network; and this is further subject to a minimum delay inherent in the finite speed of information transfer. In other words, the best promise we can make is: if you wait t seconds, I promise the data can be uniform, assuming that all agents honoured their promises to block further changes. In this sense, all consistency has to be "eventual" (Re: ACID/BASE) in the sense that a delay must be introduced somewhere. Banks do this deliberately, of course, when they cease trading at the end of each day and force customers to wait three working days for transaction verification, in spite of modern communications. In some circumstances, one might place this determinism ahead of accuracy of timing, but there is a trade-off here too. However we choose to define consistency, we have to deal with distortion of the history of data and/or artificial slow-down in capture performance.

There is one final point that I have never seen discussed: why are the end users not expected to have consistent knowledge too? If we really care about consistency, then what about the consumers of information from the distributed data? Do we put a lot of effort into making all the data in a database be the same at the same moment in time and then not control the time at which users look for answers?

If one user looks up data earlier than another, or from a greater distance than another user, the latency can easily lead to them reading different values. The technology does nothing to help them resolve this issue (if they actually care). They are on their own. Is this itself not an inconsistency in the insistence on consistency?

If your head is now spinning, then you are not alone. Relativity is hard. Even the Nobel committee refused to award Einstein for his work on this subject as it was too controversial at the time.

In summary:

- Consistency does not happen by itself, because the finite speed of information makes different values current at different times and places.

- Data values must come to equilibrium by cross checking the values at different locations.

- We have to deny access to the entire system until all values are trusted or verified to be the same. This requires us to choose an arbitrary boundary at which we stop caring.

- What is the entire system? Does it include the end users?

Git

An example that illustrates the issues nicely is the distributed versioning system git where the full distributed system is actually intended to be inconsistent. Different branches or "partitions" have very different data. There is no sense in which a user could look at an arbitrary user's repository and expect to see a consistent view of the whole. But one could imagine that, if changes ever stopped happening, all the different versions could be merged into a single state of equilibrium. Whether or not this happens, it is perfectly possible for users to have a useful and locally consistent data experience without being forced into global consistency.CFEngine

CFEngine promises to provide samples of distributed systems with 5 minute resolution at all times. Since the latency of the network cannot be determined uniformly, all data values are stored with their "last seen" time and location, indicating when they arrived within the local view and from which IP address/context.The conclusion is that consistent use of the data is entirely up to the end-user no matter what happens inside the distributed system. Since the end-user has to take responsibility for the data anyway, this surely means that actual use of the data cannot be strongly affected by whether there is complete distributed consistency within the distributed database, because it is only what happens inside the end-user that matters. The end user's experience depends only on the specific history of observations it has experienced, not on the global state. This is basically the argument made by Rich Hickey in [11] and Nathan Marz [8]. As long as we label data with their spacetime coordinates (location and sample time), a consistent computation can always be carried out. This is a move from single values to tuples that label values with space-time context.

V → (t, x, V)

This is exactly what Einstein had to do to make sense out of high speed travel. This is called a frame of reference. The strategies for maintaining consistency (copy on write, read/write locking, atomicity, etc) are all about setting a boundary between The System and The User, i.e. about handing over responsibility. But The User cannot be separated from The System if we are serious about data consistency in the system, and if we do not need strong consistency then these locking mechanisms merely slow down a dynamic system pointlessly in order to equilibrate it.

This suggests that ACID versus BASE debate is no debate at all. It is question of an arbitrary placement of a line or, dare I say, partition. It is just a question of where the limits of the system are defined, -- it all depends on the user. - P is for Partition-Tolerance

"P" is the vaguest of the properties in the CAP literature. The meaning of a partition is clear enough, but what is partition tolerance? Well, it refers to what agents promise to do in the event that they need to rely on information that is located in a different partition (i.e. someone they can't talk to). An agent may be said to be partition tolerant if it promises to deliver a "correct" response to a query within a finite amount of time Δt < Δtmax, even if its response depends on information from another agent in a different partition.

Although this sounds very ad hoc, it is theoretically possible to define a correct response for chosen regions of a distributed system, for every conceivable scenario, as a piecewise function, and get agents to keep such promises. However, these are of course dependent on the definitions of "C" and "A", and the work required to do this is not useful. If we follow a promise theory perspective, or equivalently that of [11], then we have a description where the concept of partitions is irrelevant. Then the only useful thing we can say is that partition tolerance cannot be defined for push based systems at all, because there is no way to know whether equilibration happened.

Where are we?

The CAP theorem claims that a distributed system can have two out of three of these properties, but not all of them at the same time. In other words, according to the theorem, we can have CA, CP, and AP systems.

We can define "A" and to some extent "C", but we also see that the concepts as discussed for databases are somewhat ad hoc, and more interesting is how one deals with the issue of end-user viewpoints. Hickey [11] has pointed out that, if you want to model causation from a consistent viewpoint, without needing to stop the world to do it, a simple model is to build a private spanning tree of the causal process [8]. This was recently used as an approach to `beating the CAP theorem' [11]. And, of course, it is exactly what git does.

What these examples say is that the premise on which CAP is built is itself the problem.

Time and the many worlds interpretation

"If you have one clock, you know what the time is. If you have two, you are not sure."Anonymous

I used the mundane view of time in these definitions to make them workable. As pointed out in [3], agents in a distributed system do not necessarily have a knowledge of a global time; and [3] also discussed whether local time is available. In practice there are no systems without an internal clock (Ethernet, MPLS, ATM, etc need a clock, for example), so one can always measure time intervals Δt needed for "A".

To achieve "C", we said that agents need to be able to coordinate global state by equilibration. This does not require a notion of global time. Each agent only needs its own local time to make consistent computations -- but once users are involved, the collaboration of multiple users around the distributed system requires time labels to make sense to everyone and be comparable.

| Agents cannot use the arrival of transactions themselves as the measure of time, because this is not independently predictable, so there must be a separate clock. |

What information systems need to capture is the law of cause and effect: causes precede effects in a given time-line. The idea here is that the future is a function of the past. The laws of physics are actually formulated in this way (there is a subtlety about functional recursion here, which I will not go into). This does not imply that there must be only one time-line, however.

The End User is responsible

Now you might ask; why do we need to equilibrate all the time? Not all changes to data affect all users and processes at the same time, as different users are interested in different parts of the distributed data -- and this is absolutely right. Version control systems like git, as well as techniques like A/B testing for continuous deployment, make explicit use of this, and create private copies of reality for each user so that it doesn't matter that different users will see different versions. So why all the fuss about consistency at all? Since the end user has ultimate responsibility for using the data ("at their own risk") surely what is important is to make a predictable environment for each user to enable him or her to deal with the information available in whatever way suits them best?

This is essentially the argument in [11,8] and this mode of thinking has a long history (no pun intended) in science. By assigning each computation its own private context, the world diverges into a so-called Many Worlds interpretation, as championed by Kripke in logic, and later by Everett in physics. These worlds need never have to meet, but if they can be re-merged into one another by the process of equilibration again.

Maintenance is equilibration

If our distributed data actually refers to our IT infrastructure, which is the case with configuration management, then all the same things apply. It would be a mistake to think that CAP is only about SQL/noSQL databases. The difference between a data store and an infrastructure is that infrastructure is designed to have certain characteristics over time. Random accumulations of inputs are not. That means we can formulate a policy for state, which is part of the design.

What the CAP discussion tells us here boils down to what I would call Maintenance and Convergence. These are the properties of configuration management that lead to equilibration of relative state.

Policy is also data about the system, but at a meta-level. It can be used to maintain the stability of raw data files that govern the behaviour of the infrastructure, thus in turn equilibrating the behaviour. It purpose is to represent consistency from a slowly varying perspective. We can separate out a predictable part that we can rely on and then automatic processes can run in real time to counteract noise in the system. This is what CFEngine was designed to do and the speed of operation is growing more mission critical by the day.

Somewhere along the way, when pushing out releases became more frequent, we lost sight of this need for continuous maintenance. But this is not good enough to maintain host equilibrium or consistency over time.

| Promise theory tells us that it is not real or virtual hosts that we should think of as the smallest unit of infrastructure but each data and program resource individually. Thus to make several of these work together on a host, they need to equilibrate. That is why regular maintenance is needed to cope with change. |

Mission criticality, even over short intervals, is becoming increasingly desirable for success in a high speed world. Then CAP says that the maintenance or local correctness of your infrastructure depends on the configuration repair system being online and available within tmax. This, for instance, is why CFEngine went from examining its repair schedule every day in 1993, to every hour, to every 15 minutes and today every five minutes by default. Some users are known to execute configuration repairs every minute.

Continuous deployment

In continuous deployments, either in web operations or software releases, rapid change is most naturally handled by allowing different branches of reality to fork off and co-exist until the right time to equilibrate them. If these changes did not split off into their own reality, if we tried to bring everything into a single time-line immediately, that single time line would be completely unpredictable and unusable for everyone. A globally consistent view had better not change too quickly. This is the thinking behind git branches, A/B testing, staging environments, and so on.

How quickly does infrastructure need to respond? Deployments or changes are not truly continuous is a mathematical sense of "functions over the reals" of course. They happen over intervals of time that are quite long compared to the rate at which data can change in our information systems. From 2000-2003 I spent some time examining this idea of data resolution in system administration and developed the models, using CFEngine 2 as the proof of concept, to balance stability (equilibrium) and change. The result is CFEngine's 5 minute model of locally consistent viewpoints that are then reevaluated on each run to adapt to changes in the environment. User who don't run configuration checks every five minutes risk losing consistency due to random changes from users and other systems like package managers. Again, we have to acknowledge that infrastructure is not a transactionally safe system that maintains integrity by itself [15].

Probability of consistency: Push and pull

When engineers still believed that computers were transactional and deterministic, the traditional way of updating them was to use push-based methods like rdist or some kind of package update from a central source. Thanks to CFEngine's investigations in the 1990s, most configuration management systems today use pull based approaches to management so that availability and consistency can be measured.

If we only "throw things over the wall", then every push creates a system partition -- where we cannot observe what goes on on the other side. This is the ultimate parallel universe scenario where the worlds never come back together.

| If CAP says anything about operations, it is this: don't just push data to hosts from a central source: no network shells or distribution mechanisms. The end user client needs to control its own reality in order to have a consistent view of the world even for itself. For there to be global consistency requires a lot more than that. |

What did we learn?

The other terror that scares us from self-trust is our consistency; a reverence for our past act or word because the eyes of others have no other data for computing our orbit than our past acts, and we are loath to disappoint them ... A foolish consistency is the hobgoblin of little minds...--Ralph Waldo Emerson

In the first part of this essay, I have tried to pick apart the original intention of the CAP conjecture and clarify what it can and cannot mean for distributed systems. I have also tried to point out some of the inconsistencies in the idea of consistency itself, and challenge you with the question: how important is consistency to you and over what time scale?

I am keen to underline that CAP is not a theorem, because there is a real danger when rumours and truisms assume the authority of truths about the world, and are used uncritically, often for `techno-political' purposes. The authority in the term "theorem" should not be abused to justify certain viewpoints that can easily be justified by more careful thinking. And a good theorem should be inescapable and basically self-evident. There are indeed truths about systems that can be proven, but CAP is too vague for that.

The original conjecture pointed to three modes of operation which were labelled:

- CA without P,

- CP without A,

- AP without C.

While I showed that these cannot cover the full story of distributed systems, in the vague meanings that were originally intended, they do still represent three identifiable modes of operation for distributed systems that illuminate valid trade-offs. Thus we can still use them as points of reference to discuss systems, even beyond simple databases (which is my original intention with this essay). In addition, there is the deeper question of user relativity that is the root cause of inconsistency.

Consistency is often presented as a property for databases or services, but it also applies as an emergent property for other distributed systems. Database traditionalists, from the privileged pedestal of a tightly controlled environment, will probably roll their eyes at the idea of emergent properties. There is still a hard core in computing who believes in the possibility to control absolute determinism [14]. But as distributed systems and their infrastructure become larger and faster, we need to get out of that delusion as quickly as possible. Relativity -- i.e. the difference in viewpoints resulting from separation in time and space between user and data -- makes consistency more complicated than the simple stories of the past, and as the rate of change in large IT increases, the effects of relativity become more of a headache.

This first part of my essay only scratches the surface of what consistency and availability mean to distributed systems, but now that we have some of the background on record, for the second part I want to think about a larger issue of what this means for the greatest distributed system of them all: our IT infrastructure.

Acknowledgment

I am grateful to John Allspaw, John Willis and Diego Zamboni for helpful remarks on a draft version of this article. They are, of course, absolved of any blame regarding the content. I would also like to thanks Ted Zlatanov for suggesting the topic.

Valuable reading on CAP theorem

- Eric Brewer, Towards Robust Distributed Systems?

- Eric Brewer, CAP Twelve Years Later: How the "Rules" Have Changed

- Nancy Lynch and Seth Gilbert, Brewer's conjecture and the feasibility of consistent, available, partition-tolerant web services, ACM SIGACT News, Volume 33 Issue 2 (2002), pg. 51-59.

- Daniel Abadi,Problems with CAP, and Yahoo?s little known NoSQL system

- Michael Stonebraker, Errors in Database Systems, Eventual Consistency, and the CAP Theorem

- Henry Robinson CAP Confusion: Problems with "partition tolerance"

- Coda Hale You Can't Sacrifice Partition Tolerance

- Nathan Marz, How to beat the CAP theorem

- NoCAP - Part II Availability and Partition tolerance

- Armando Fox and Eric Brewer, Harvest, Yield and Scalable Tolerant Systems (1999)

- Rich Hickey, Are we there yet? (2009)

- M. Burgess and J. Bergstra, A Foundational Theory of Promises (2004-)

- D. Aredo, M. Burgess and S. Hagen, A Promise Theory View on the Policies of Object Orientation and the Service Oriented Architecture (2005-2006)

- M. Burgess, Three myths holding system adminisration back...

- M. Burgess and A. Couch, Proof that rollback is a "total" fiction.