'proximations

A talk presented at Amsterdam University on 20th June, in honour of Jan Bergstra's 60th birthday.

A picture of me is not me, and yet it suffices for many purposes as a

representation of my qualities.

A picture might itself not be

perfect. It could be blurred, it is certainly only two-dimensional, and it does

not move, but we understand these limitations and accept them because

they are suitable compromises for many intents and purposes. Unlike

the genie in the bottle, I do not have to appear in person every time

someone thinks about me.

Why can a likeness be good enough? It often suffices because we are not generally interested in every aspect of a thing at one time. There is also a cost involved. It could be significantly cheaper to represent me as a black box in a game of strategy, than to fashion a perfect statue with my likeness. Moreover, we might learn something by an approximation: replacing an exact likeness with something more generic means we have identified a pattern that connects a single instance to a wider family of things.

Let's hazard an approximate definition of approximation:

This definition will not be satisfactory to everyone. It might not cover all aspects of approximation that you want to know about, but let's just say that it suffices for the purposes of this talk.

What I would like to observe in this talk is that approximations (representations) are all around us in every field of work, especially in the so-called `exact sciences'. For a scientist like Jan, approximations are something to be used creatively and faced frankly -- and this can often lead to important insights about theory and practice. Approximations are part of a larger issue that is facing a widening area of natural science today: dealing with incomplete information. Much as we would like to know everything in absolute detail, there are fundamental limits to knowability, and science has to make sense of these.

By the end of this talk, I'll tell you about an example where attention to such detail by one researcher (Jan) reminded someone else (me) of -- and independently confirmed! -- a fundamental issue in error correction that I have been interested in, relating to IT management, with very practical consequences.

More common than you think

Approximations are everywhere. They are much more common than most

of us imagine. Moreover, we are often taught to accept that they

represent the truth. For example: we typically round numbers up to the

nearest power of ten: sixty becomes a hundred, six becomes ten,

etc. Today, a whole generation addicted to MP3 encoded music believes

that the sound quality of MP3 is very good, despite the fact that it

is heavily filtered, compressed and approximated to optimize file size compression

(an approximation based on cost). The experience of live music is frequently

shocking today, as we are so used to hearing compressed sound in media.

In natural philosophy or science, the theory of errors and observations (attributed to DeMoivre and Gauss) has led to the modern theory of statistics and is an accepted part of dealing with uncertainty brought about be approximate observations.

More surprisingly for many, calculus itself, as per Newton and Leibnitz, which takes the infinitesimal limit approaching infinite resolution, is generally thought of as being exact -- precisely because it has infinite resolution. However, this is not an accurate representation of the real world, which we know not to have infinite resolution. It is only part of what is rarely referred to today as the `continuum approximation'. Calculus leads to simple rules and neatly defined functions that bring an elegance to computation with real numbers, but these functions do not truly exist in natural phenomena with infinite resolution.

Approximation and verification

An approximation is only a partial mapping then. There is inevitably something incomplete about it, even though there is often great scope to choose what qualities we would like to preserve.

How do we tell the difference between an approximation and an

original? Clearly we have to measure it. That requires sampling both

at a particular resolution. The subjectivity of the observer (who

makes the comparison) now comes into play.

Fourier's spectral decomposition of functions together with Nyquist's theorem tells us how careful we need to be in order to be able to see simple changes, rise and fall in functions. In order to see a change in the original, we have to sample it with at least twice the frequency of the equivalent component in the Fourier decomposition that represents the change in order to see it.

Recalling music once again, Fourier's analysis is used by engineers to test the fidelity of sound equipment: by sending in a square wave (which has an infinite of frequencies in its Fourier series) and comparing it to what comes out, one often sees `ringing' or overshoots at the corners (as in the k=11) image above, indicating that certain frequencies have gone missing.

Of course, square waves don't truly exist either, since they require infinite frequency components (and hence infinite energy cost) to make them, so the original input was itself only an approximation (like k=49).

Information gone missing and misrepresented

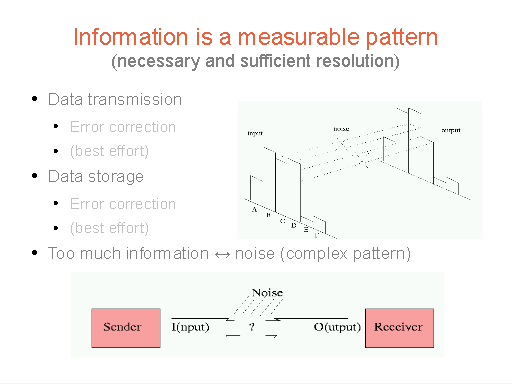

In 1948, Shannon published his theory of communication (today

referred to as Information Theory), in which he commented on the

meaning of information as an approximation process. All represented

information is basically digital as it must be classified into

separate categories by human observers (as Francis Bacon taught us),

deciding which side of a line on a measuring stick a measurement

belongs to. He went on to discuss what happens beyond digitization if

information is copied from one place to another. Copying data it

itself an approximate process, because noise from the real environment

brings distortions that have to be undone or corrected, and worse than

that -- the transmitter and receiver might have different resolutions.

The upshot? Some kind of approximation is needed.

To correct errors we often want to try to undo them. The sense of this is a subject that both Jan and I have studied quite independently, and I'll get to that in a while. Perhaps we should simply note here that Computer Science, often treated with pure mathematics, is not immune to these issues of approximation. Every data operation in a computer requires a copy of bits, which in turn is subject to noise and the need to correct errors at often great expense. We deal with a world of incomplete information.

Information, information everywhere, but not a dropped bit

The focus on information is not particular to computing. Science

everywhere is coming around to the idea that the world around us is

not part of the clockwork, deterministic vision of Newton and his

disciples. Scientific theories increasingly rely on statistical or

probabilistic notions in an attempt to model either intractable

complexity, or literally unknowable information as in Quantum Mechanics. What has come to be

known as Natural Laws are retreating from the certainty of describing

facts, to something more like making a best-effort promise, to even just `let's wait and see'!

Ultimately our belief in scientific law is a matter of trust. And as the Russian's proverb goes: trust, but verify! The verifier is now centre stage, and he or she is a subjective individual. This is practically amounts to a crisis of identity for modern science.

Promising expectations

In 2004, when Jan and I first met, I was thinking about these problems in relation to computer configuration management (for a summary see [1]). I came up with idea that a useful way of describing real scenarios is to use a specialized representation (or approximation) of the concept of a promise. We have since worked on a theory of such things for the past 8 years as an enjoyable way of passing time in Utrecht's cafés. Today this way of thinking is, I believe, going to be increasingly important in the practical use of computers -- data sizes have reached the limits of assumptions built into error correction algorithms. Computer memory (RAM) only works, in a bath of cosmic rays, because its errors are corrected transparently by unseen algorithms, but if our real computers obey the laws of physics (and they do) then we have to confront real world issues that ought not be approximated away.

All computers are analogue computers

Remember analogue computers? Before digital computers were cheap enough for mere

mortals, one could solve certain differential equations using electrical circuits.

LCR circuits (L is inductance, C is capacitance, and R resistance) behave like

integro-differential equations. Capacitors accumulate or integrate, inductors respond

to rates of change or differentiate and resistors tune the input voltages. By setting

up an analogous electrical circuit, one could solve a differential equation by

measuring voltages. Over time the `analogy' got forgotten and analog(ue) has simply come to

mean not-digital.

This is wrong of course. Even digital computers work by analogy. They use electrical circuits with approximately digital behaviour. Then by throwing away information, these pseudo digital circuits can mimic digital behaviour. So, if physics can mimic computation, is it true that every physical process performs an analogous computation? If so, that should include `management'.

Physics computing the future

Every physical system represented by an approximate difference equation, may be thought of as a computer, calculating the values of some observables. The world we live in is simply the output register of this massive computer. One can map the typical elements of a differential system approximately onto an Turing Machine (as in the slide), with initial conditions, transition rules (the solutions of differential operators, e.g. often represented as Green functions).

Although the differential system has infinite resolution, this is a

flaw not a benefit of its representation. A typical computer, which

approximates a Turing Machine, has finite resolution - and more often

systems are simply being simulated on such machines rather than

looking for nifty art to solve differential equations with boundary conditions.

So it is true that analogue computation and digital computation are

both forms of computation, each with approximate

representations. Moreover, the real world (which we should not

rightfully call an approximation to anything except itself) is

certainly doing something that bears all the hallmarks of computation, and we

use that property to build computing machines that simulate Turing machines.

But there is another side to real computers that is not obvious in any of this:

a completely separate program that is being executed -- and that is the one

that brings about intended changes to the programs and software of the computers.

The `management' of the system; call it `system administration' or `IT management'.

It is the world of installing software, taking backups, clients and servers,

and it too mimics a computation.

Division by zero

A couple of years ago, Jan and I were walking through the streets

of Utrecht on a rainy afternoon. Jan began telling me of his interest

in revisiting the issue of division by zero and how the inability to

divide by zero was a source of many problems in computation[2].

At the time, the discussion went largely over my head, being neither my field nor having thought about it before. On the other hand, as a physicist I have long experience of issues with dividing by zero in complex analysis, and making sense of calculations where such problems can occur. All I really remembered from this first conversation was that I was fascinated that someone was willing to study such a problem today. Weren't these problems all decided centuries ago by men with curly wigs? I have great admiration for anyone who can ignore dogma and ask a pertinent question. As a student I always found it fascinating how a succession of different `truths' (lies) is presented at different stages on one's career.

We are all taught that you simply can't divide by zero. Then we are taught that the answer is infinity. Then we are taught the answer is `indeterminate', then that dividing by zero is essential to compute certain integrals where the sum of the residues is actually the legitimate answer. The story goes on in modern physics with methods for `regularization' and `renormalization'. But as far as I knew, no one has looked at this issue from the viewpoint of computation rings. This was what Jan was looking at together with John Tucker.

What stuck in my mind was the feeling that their solution(s) to division by zero had to be related to an analogous problem I was concerned with in IT management: that of undoing human errors or `rollback'.

The strange case of zero and the missing information...

Rollback is a term coined in database transaction theory, in which carefully monitored transactions can be reversed or undone by rolling back a kind of transaction log. The term was later usurped by system managers for any kind of `undoing' of mistakes in quite uncontrollable environments however. I have always asserted that such rollback is impossible as it is based on incomplete information.

However, I have long been interested in the idea of ZERO as an operation. Zero is a unique and important object in mathematics. It is an identity operation under addition, but it is a fixed point under multiplication. It takes any input value and transforms it into the same zero value. Herein lies a desirable and an undesirable quality:

- Zero brings certainty of outcome: no matter what we give it, it gives us a reliable and predictable answer: 0. It is a patron saint of certainty! It can therefore be used to enforce a policy on a system: just calibrate what you want to zero, and you can get there from any initial condition easily and repeatably. It is like a GPS finder -- no matter where you start, it will get you to your destination.

- When trying to undo this operation, it leaves a conundrum: which of those many possible values should it return to us when trying to undo the operation? Without additional information, how shall we get back to where we were. An obvious answer is to try to remember where we were, but that is only practical if you know that you are only going to use the zero once.

To bring predictable management to systems, I developed a set of operations like a GPS for system state in the CFEngine software, to implement predictable policy enforcement to computer systems that behaved like multiplying by zero. No matter what state you start from, these operators bring you back into compliance with a desired state (calibrated to a `zero'). Before this, system administrators used to achieve predictability by demolishing systems and rebuilding them in order to start from the only `zero' they knew.

The question of what happens if one makes a bad decision in choosing policy and wants to go back to what came before has been a persistent point of contention by users. And this is exactly the question of how do you divide by zero!

It turns out that the same kind of question occurs in

the physics of phase transitions too. Going from an

arbitrary complex initial state to a frozen ordered state,

or condensate is like multiplying by zero. Bose-Einstein

condensation and superconductivity are states like this. But what happens

when we try to reverse a condensation? Evaporation will not restore the exact

state prior to freezing. The only way forward to map the new disorder onto the

old by approximating the macro-state of the system. I could talk about this for

a long time.

To cut a long story short, Jan's theoretical work with John Tucker uncovered the same solutions I had proposed for recovering from poor choices of policy (like getting back to where you started from in a GPS journey). You can try to remember what your last state was (but that depends on how you define where your journey starts and ends), or you can say: forget about the past and just go forward or stay where you are and reevaluate the future, i.e. dividing by zero gives zero, just like multiplication. Then you go forward with predictability.

I won't go into more detail here, as this has been described many times. I simply want to end with a comment about fundamental research.

The end is the beginning?

Many people are sceptical of what seem to be impractical, abstract or `merely theoretical' questions in research. Those researchers who try to ask such questions are never thanked very early for their conviction. But this story shows that such theoretical questions often have very practical applications if only one takes the time to look for them. These questions might never have been answered without such an attention to detail and a sometimes stressful disregard for public popularity.

An abstract investigation can be thought of as an approximation to a class of real problems. The fact that you don't know what it approximates to begin with doesn't matter at all. We get there in the end.

A few weeks ago I was reading another possible association to division by zero. Roger Penrose has written a lot about the origin of the universe and its ultimate end. The origin-moment of the Big Bang was a time before which there was not supposed to be any information in the universe, but Penrose rightly points out that the second law of thermodynamics causes a problem for our current understanding of the world. According to the second law, the amount of information (variability or entropy) is always increasing. So near the beginning there should have been very little information, i.e. the universe should have been very ordered. Modern accounts of the universe rely on `randomness' however, which can only be explained by the presence of a lot of information. Where did that information come from?

The evidence today shows that the universe will not likely collapse back, but will continue to expand onwards and outwards. However, Penrose makes a wild speculation that by mapping the ultimate sparse end of this universe onto the beginning of a new one, using a conformal transformation one might see the whole process be continuously self-sustaining: a closed loop of new universes as re-scalings of old ones, in which the information is handed down to the next generation. It is like mapping 0 → 0-1 at the end of time.

I think there are many problems with this idea, and it is literally far fetched, but his point about the entropy is still valid. And this opens up fascinating new questions. We should celebrate the asking of such questions, whether they bring immediate answers or not. The questions themselves are valuable, and on the occasion of his 60th birthday, it is a great pleasure to honour Jan as a person who has the moral conviction to ask such hard questions, even when the personal cost of answering them can be high.

Postscript

The theme in this talk was approximation and its many forms. Approximation is a powerful tool -- not something to be embarrassed about as it is often presented. Computer Science like many other sciences has to face up to to be spectre of approximation more directly than it has in the past. Approximations can be relative or absolute: skirting around partial information loss or replacement, or advocating a complete reset.

References

[1] Mark Burgess, Testable System Administration (Journal of the ACM.)[2] Jan A. Bergstra, John V. Tucker, Division Safe Calculation in Totalised Fields, Theory of Computing Systems / Mathematical Systems Theory - MST , vol. 43, no. 3-4, pp. 410-424, 2008